Speech recognition module for React Native using the Vosk library

    npm install -S react-native-vosk

Vosk uses prebuilt models to perform speech recognition offline. You have to download the model(s) you need from the official Vosk website. Avoid overly large models, because the time required to load them into your app can lead to a bad user experience. Then unzip the model in your app folder. If you only need the iOS version, put the model folder wherever you want and import it as described below. If you need both iOS and Android, you can avoid copying the model into both projects by importing it from the Android assets folder in Xcode.

Experimental: Loading a model dynamically into the app storage, outside the main bundle, is a new and experimental feature. We would love for you to test it and let us know whether it is a viable option. If you choose to download a model to your app's storage (preferably internal), you can pass the model directory path when calling vosk.loadModel(path).

To download and load a model as part of an app's Main Bundle, just do as follows:

Starting from version 1.0.0, the model is no longer searched for in the Android project by default.

In your root project folder, create a folder named assets (next to the src one) and put your model folder in it. The path should look like this: assets/model-en-en, if you downloaded the English model for example.

Important: The model folder must be directly in the assets folder, not in a subfolder. If you have multiple models, you can put them all in the assets folder like this:

    model-en-en/
    model-fr-fr/
    model-de-de/

You can import as many models as you want. If your model folder name does not start with model-, you won't be able to load it. The model folder must contain all the files provided in the zip you downloaded from the Vosk website. If you don't have an assets folder in your project, just create it.
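As a quick sanity check for the naming rules above, the sketch below validates a model path before it is used. The helper name `isBundledModelPath` is hypothetical and not part of the library; it only encodes the two rules stated here (the folder sits directly under assets, and its name starts with model-).

```typescript
// Hypothetical helper (not part of react-native-vosk): checks that a path
// follows the conventions described above.
function isBundledModelPath(path: string): boolean {
  // Normalize Windows separators and drop any trailing slash.
  const parts = path.replace(/\\/g, "/").replace(/\/+$/, "").split("/");

  // Expect exactly "assets/<folder>", i.e. no intermediate subfolder.
  if (parts.length !== 2 || parts[0] !== "assets") {
    return false;
  }

  // The folder name must start with "model-" and have something after it.
  const folder = parts[1] ?? "";
  return /^model-.+/.test(folder);
}

console.log(isBundledModelPath("assets/model-en-en"));
console.log(isBundledModelPath("assets/models/model-en-en"));
```

A check like this could run in a build script or a unit test, so that a misnamed folder is caught before the app tries to load it.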

If you have any trouble, double-check that you are following the naming convention, or check the example project provided with the library: example

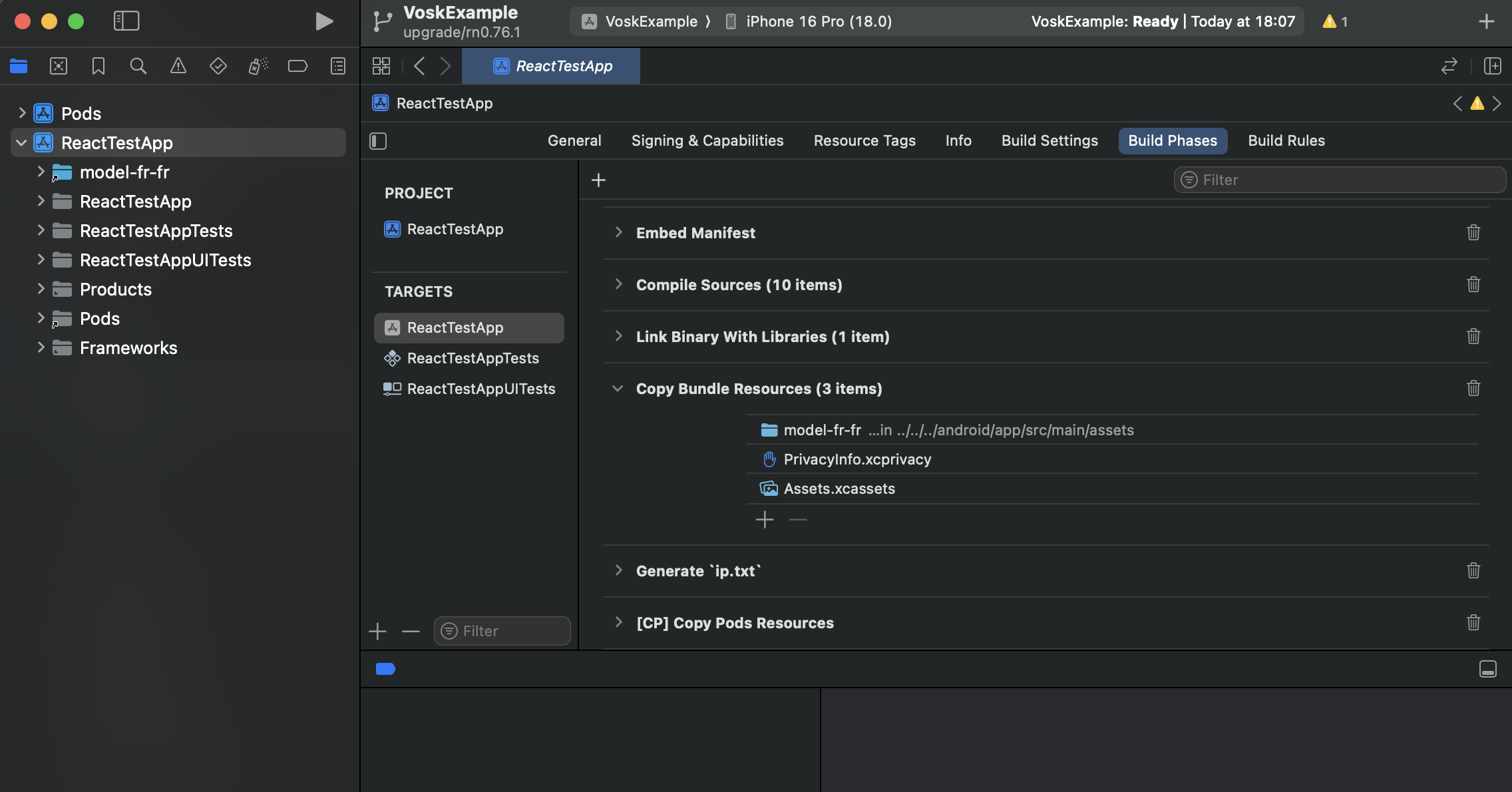

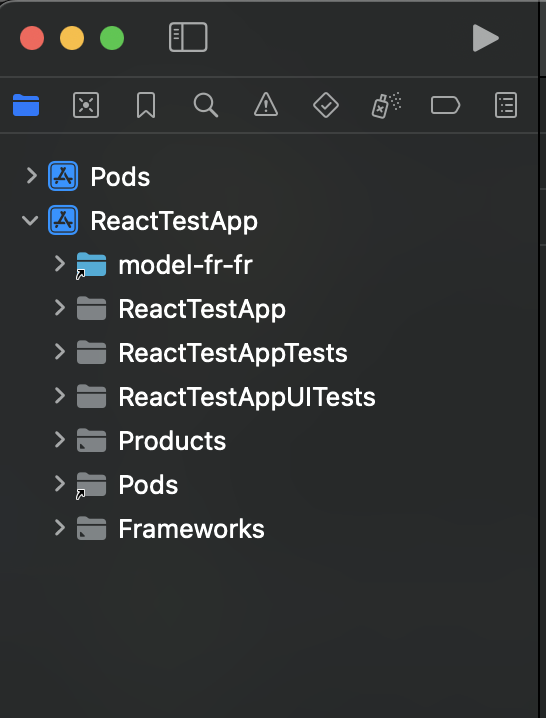

In Xcode, click on your App in the projects panel, then go to the Build phases tab of the settings panel. Scroll down to the Copy bundle resources accordion. Click on the + button at the end of the list. Click on the Add other... button at the bottom of the prompt window.

Then navigate to your model folder. You can navigate to the assets folder you may have created for Android and choose your model there. This avoids having the model copied twice in your project. If you don't use the Android build, you can put the model wherever you want and select it. Click on Open.

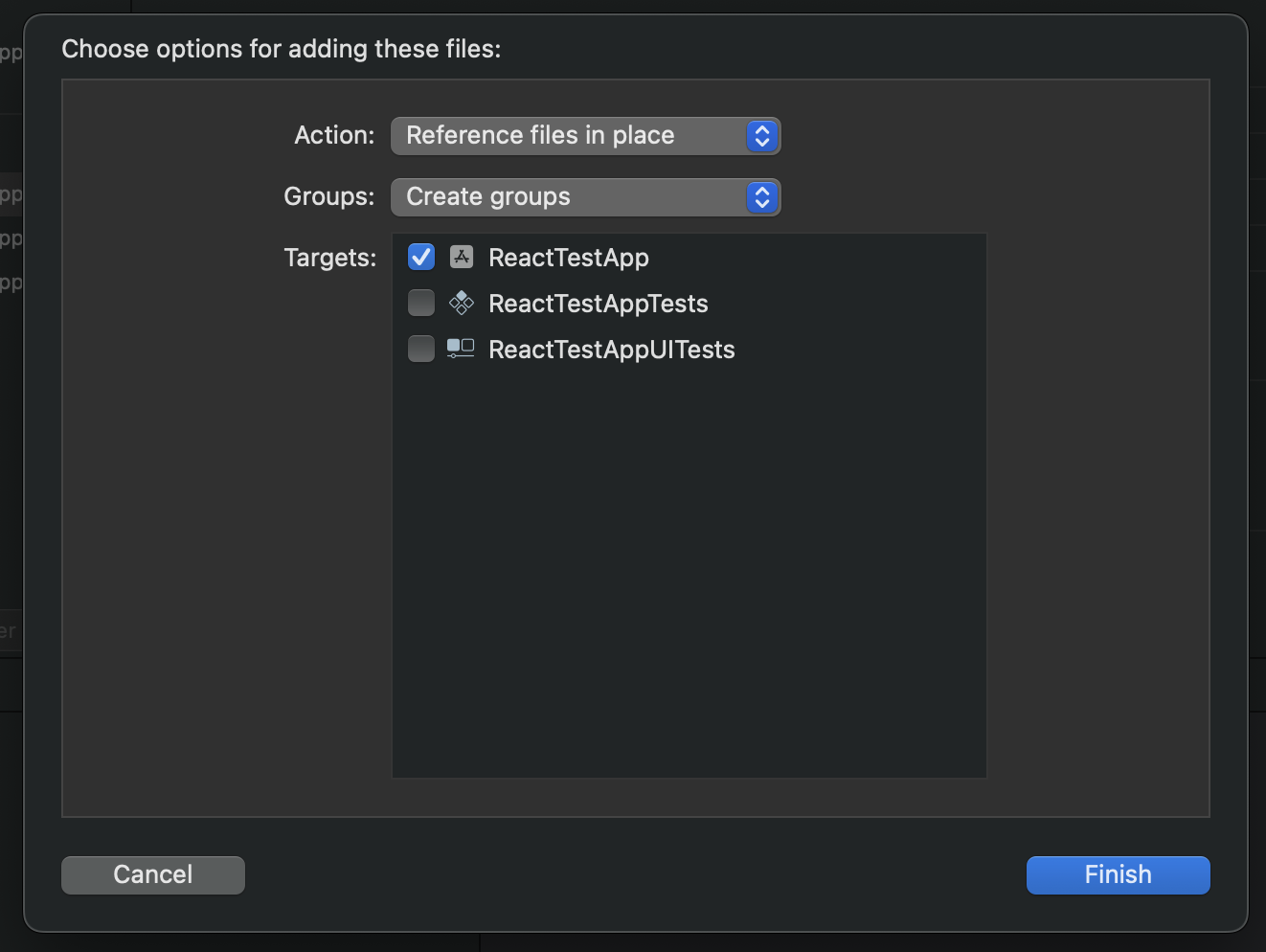

Check Copy items if needed. If you want to avoid having the model living twice in your app folders in order to reduce your bundle size, select Create folder references. That's all. The model folder should appear in your project. When you click on it, your project target should be checked (see below).

Microphone permission

Don't forget to add the microphone permission to your Info.plist file if you haven't already:

    <key>NSMicrophoneUsageDescription</key>
    <string>We need access to your microphone for speech recognition</string>

Or in Xcode, open your Info.plist file, hover over the last line and click on the + button that appears. Select Privacy - Microphone Usage Description in the dropdown list. In the value field, enter the message that will be displayed to the user when the system asks for microphone permission.

This library ships with an optional Expo config plugin so you can use it in managed or prebuild workflows.

Add the plugin in your app.json (or app.config.js):

    {
      "expo": {
        "plugins": [
          [
            "react-native-vosk",
            {
              "models": ["assets/model-fr-fr", "assets/model-en-en"],
              "iOSMicrophonePermission": "Nous avons besoin d'accéder à votre microphone"
            }
          ]
        ]
      }
    }

Options (all optional):

- `models`: Array of relative paths (from project root) to model folders. They will:
  - On iOS: be copied into the Xcode project root and added as resources.
  - On Android: be passed as a Gradle property (`Vosk_models`) so UUID generation copies them into the library's generated assets. Ensure each folder is a valid Vosk model directory.
- `iOSMicrophonePermission`: String used for `NSMicrophoneUsageDescription`.

Notes:

- If `models` is omitted, Android falls back to legacy scanning of an adjacent `assets` folder for `model-*` directories (bare workflow behavior).
- Bare React Native users can continue to integrate models manually; Expo is not required.
- After changing the plugin config, run `npx expo prebuild` (or `expo prebuild -p ios|android`) to regenerate native projects.
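If you prefer a dynamic config file (app.config.js is mentioned above) over app.json, the same plugin entry can be built in code. The following is a minimal sketch; the `voskPlugin` helper and the `VoskPluginOptions` type are hypothetical names introduced here for illustration, and only the two option names come from the list above.

```typescript
// Option names mirror the plugin options documented above.
type VoskPluginOptions = {
  models?: string[];
  iOSMicrophonePermission?: string;
};

// Hypothetical helper: builds the [name, options] pair used for a plugin entry.
function voskPlugin(options: VoskPluginOptions = {}): [string, VoskPluginOptions] {
  return ["react-native-vosk", options];
}

const config = {
  expo: {
    plugins: [
      voskPlugin({
        models: ["assets/model-fr-fr", "assets/model-en-en"],
        iOSMicrophonePermission: "We need access to your microphone for speech recognition",
      }),
    ],
  },
};

// In a dynamic config file, this object would be what the file exports.
console.log(JSON.stringify(config, null, 2));
```

Keeping the model list in code makes it easy to derive it from an environment variable or a shared constant, at the cost of a slightly less readable config.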

    import Vosk from 'react-native-vosk';

    // ...

    const vosk = new Vosk();

    vosk
      .loadModel('model-en-en')
      .then(() => {
        const options = {
          grammar: ['left', 'right', '[unk]'],
        };

        vosk
          .start(options)
          .then(() => {
            console.log('Recognizer successfully started');
          })
          .catch((e) => {
            console.log('Error: ' + e);
          });

        const resultEvent = vosk.onResult((res) => {
          console.log('An onResult event has been caught: ' + res);
        });

        // Don't forget to call resultEvent.remove(); to delete the listener
      })
      .catch((e) => {
        console.error(e);
      });

Note that the start() method will ask for audio recording permission.

- Primarily intended for models that are not included in the app’s Main Bundle.

- Use a file system package to download and store a model from a remote location.
  - react-native-file-access is one that we found to be stable, but this is a personal preference based on use.
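The download step described in the bullets above could be sketched as follows. To avoid guessing at any particular package's API, the file operations are passed in through a hypothetical `ModelStore` interface that you would implement with your file system package of choice. The sketch also assumes that the archive unpacks into a top-level folder named after the model.

```typescript
// Hypothetical abstraction over whichever file system package you use.
// None of these names come from react-native-vosk or react-native-file-access.
interface ModelStore {
  exists(path: string): Promise<boolean>;
  download(url: string, zipPath: string): Promise<void>;
  unzip(zipPath: string, targetDir: string): Promise<void>;
  remove(path: string): Promise<void>;
}

// Downloads and unpacks a model only if it is not already in app storage,
// then returns the directory to pass to vosk.loadModel().
async function ensureModel(
  store: ModelStore,
  url: string,
  storageDir: string,
  modelName: string
): Promise<string> {
  const modelDir = `${storageDir}/${modelName}`;
  if (await store.exists(modelDir)) {
    return modelDir; // already downloaded on a previous launch
  }

  const zipPath = `${modelDir}.zip`;
  await store.download(url, zipPath);
  try {
    // Assumption: the archive contains a top-level folder named `modelName`.
    await store.unzip(zipPath, storageDir);
  } finally {
    await store.remove(zipPath); // do not keep the archive around
  }
  return modelDir;
}
```

The returned path is what you would hand to `vosk.loadModel(path)`. Checking for the folder first means the download only happens once per install.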

    import Vosk from 'react-native-vosk';

    // ...

    const vosk = new Vosk();
    const path = 'some/path/to/model/directory';

    vosk
      .loadModel(path)
      .then(() => {
        const options = {
          grammar: ['left', 'right', '[unk]'],
        };

        vosk
          .start(options)
          .then(() => {
            console.log('Recognizer successfully started');
          })
          .catch((e) => {
            console.log('Error: ' + e);
          });

        const resultEvent = vosk.onResult((res) => {
          console.log('An onResult event has been caught: ' + res);
        });

        // Don't forget to call resultEvent.remove(); to delete the listener
      })
      .catch((e) => {
        console.error(e);
      });

| Method | Argument | Return | Description |
|---|---|---|---|
| `loadModel` | `path: string` | `Promise<void>` | Loads the voice model used for recognition. Required before calling the start method. |
| `start` | `options: VoskOptions` or none | `Promise<void>` | Starts the recognizer; an onResult() event will be fired. |
| `stop` | none | none | Stops the recognizer. The listener should receive the final result, if there is one. |
| `unload` | none | none | Unloads the model and also stops the recognizer. |
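The ordering implied by the table (load, then start, then unload when finished) can be wrapped in a small helper. This is only a sketch: the `Recognizer` interface is an assumption that lists just the four methods from the table, and `withRecognizer` is a hypothetical name, not something the library provides.

```typescript
// Minimal shape of the methods listed in the table above. The real Vosk
// class has more members; this interface is an assumption for the sketch.
interface Recognizer {
  loadModel(path: string): Promise<void>;
  start(options?: { grammar?: string[]; timeout?: number }): Promise<void>;
  stop(): void;
  unload(): void;
}

// Hypothetical helper: runs `session` while the recognizer is active and
// always unloads the model afterwards, even if the session throws.
async function withRecognizer<T>(
  vosk: Recognizer,
  modelPath: string,
  session: () => Promise<T>
): Promise<T> {
  await vosk.loadModel(modelPath); // required before start()
  try {
    await vosk.start();
    return await session();
  } finally {
    vosk.unload(); // per the table, unload also stops the recognizer
  }
}
```

Putting `unload()` in a `finally` block means the model is released on both the success and the error path, which matters because models can be large.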

| VoskOptions | Type | Required | Description |
|---|---|---|---|
| `grammar` | `string[]` | No | Set of phrases the recognizer will match the recording against, returning the closest one. Add `"[unk]"` to the set to recognize phrases strictly. |
| `timeout` | `int` | No | Timeout in milliseconds to listen. |
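As an illustration of how the two options combine, here is a small sketch that builds an options object for a fixed set of voice commands. The `commandOptions` helper is hypothetical; the `VoskOptions` shape is copied from the table above rather than imported from the library.

```typescript
// Shape taken from the VoskOptions table above.
type VoskOptions = { grammar?: string[]; timeout?: number };

// Hypothetical helper: builds options for a fixed command set.
function commandOptions(phrases: string[], strict: boolean, timeoutMs?: number): VoskOptions {
  // Trim each phrase, then drop empty entries and duplicates.
  const grammar = phrases
    .map((p) => p.trim())
    .filter((p, i, all) => p.length > 0 && all.indexOf(p) === i);

  // "[unk]" makes the recognizer match phrases strictly (see table above).
  if (strict && grammar.indexOf("[unk]") === -1) {
    grammar.push("[unk]");
  }

  const options: VoskOptions = { grammar };
  if (timeoutMs !== undefined) {
    options.timeout = Math.round(timeoutMs); // the table lists timeout as an int
  }
  return options;
}

console.log(commandOptions(["left", " right ", "left"], true, 5000));
```

The result can be passed directly to `vosk.start(options)`.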

| Method | Promise return | Description |
|---|---|---|
| `onPartialResult` | The recognized word as a string | Called when a partial recognition result is available. |
| `onResult` | The recognized word as a string | Called after silence occurred. |
| `onFinalResult` | The recognized word as a string | Called after the stream ends, for example after a stop() call. |
| `onError` | The error that occurred, as a string or exception | Called when an error occurs. |
| `onTimeout` | `void` | Called after the timeout expired. |
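Since every listener has to be removed when you are done, it can help to register them in one place and get back a single cleanup function. The sketch below assumes, based on the usage examples in this README, that each `on*` method takes a callback and returns an object with a `remove()` method. The `subscribeAll` helper and the interfaces are hypothetical.

```typescript
// Assumed shapes, inferred from the usage examples in this README.
interface Subscription {
  remove(): void;
}

interface RecognizerEvents {
  onPartialResult(cb: (text: string) => void): Subscription;
  onResult(cb: (text: string) => void): Subscription;
  onFinalResult(cb: (text: string) => void): Subscription;
  onError(cb: (error: unknown) => void): Subscription;
  onTimeout(cb: () => void): Subscription;
}

type Handlers = {
  partial?: (text: string) => void;
  result?: (text: string) => void;
  final?: (text: string) => void;
  error?: (error: unknown) => void;
  timeout?: () => void;
};

// Hypothetical helper: registers the given handlers and returns one
// function that removes all of them.
function subscribeAll(vosk: RecognizerEvents, handlers: Handlers): () => void {
  const subs: Subscription[] = [];
  if (handlers.partial) subs.push(vosk.onPartialResult(handlers.partial));
  if (handlers.result) subs.push(vosk.onResult(handlers.result));
  if (handlers.final) subs.push(vosk.onFinalResult(handlers.final));
  if (handlers.error) subs.push(vosk.onError(handlers.error));
  if (handlers.timeout) subs.push(vosk.onTimeout(handlers.timeout));

  return () => {
    // splice(0) empties the list, so calling cleanup twice is harmless.
    subs.splice(0).forEach((s) => s.remove());
  };
}
```

In a React component, the returned function fits naturally as the cleanup of a `useEffect` hook.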

Default usage:

    vosk.start().then(() => {
      const resultEvent = vosk.onResult((res) => {
        console.log('An onResult event has been caught: ' + res);
      });
    });

    // when done, remember to call resultEvent.remove();

With a grammar:

    vosk
      .start({
        grammar: ['left', 'right', '[unk]'],
      })
      .then(() => {
        const resultEvent = vosk.onResult((res) => {
          if (res === 'left') {
            console.log('Go left');
          } else if (res === 'right') {
            console.log('Go right');
          } else {
            console.log("Instruction couldn't be recognized");
          }
        });
      });

    // when done, remember to call resultEvent.remove();

With a timeout:

    vosk
      .start({
        timeout: 5000,
      })
      .then(() => {
        const resultEvent = vosk.onResult((res) => {
          console.log('An onResult event has been caught: ' + res);
        });

        const timeoutEvent = vosk.onTimeout(() => {
          console.log('Recognizer timed out');
        });
      });

    // when done, remember to clean all listeners;

See the contributing guide to learn how to contribute to the repository and the development workflow.

MIT